The robot apocalypse is nigh—or maybe it isn’t. For months now, one of the liveliest debates on the internet has concerned the increasing sophistication of and prospective world takeover by artificial intelligence, particularly as it relates to artistic pursuits where humans have always exerted sole sway. In the production of text, and even more so of images, platforms such as DALL-E and Stable Diffusion have demonstrated alarming skill at producing reasonable facsimiles of original content, churning out fake movie posters and fake landscape paintings based on nothing but a few text inputs.

Does this signal the beginning of the end for creativity as an anthropocentric endeavor? Apparently not, according to Jose Luis García del Castillo y López. Born in Spain and trained there as an architect, García del Castillo now serves as a lecturer at Harvard’s Graduate School of Design. His particular province: AI and its design-world potential. After a promising early-career stint as a designer with a large engineering firm, García del Castillo eventually made the jump into researching, writing, and teaching about design technology, following what can only be described as an intellectual epiphany. “I was at a conference in Barcelona,” he says, “and I said, ‘Screw everything.’ That was my coming-out-of-the-closet moment.”

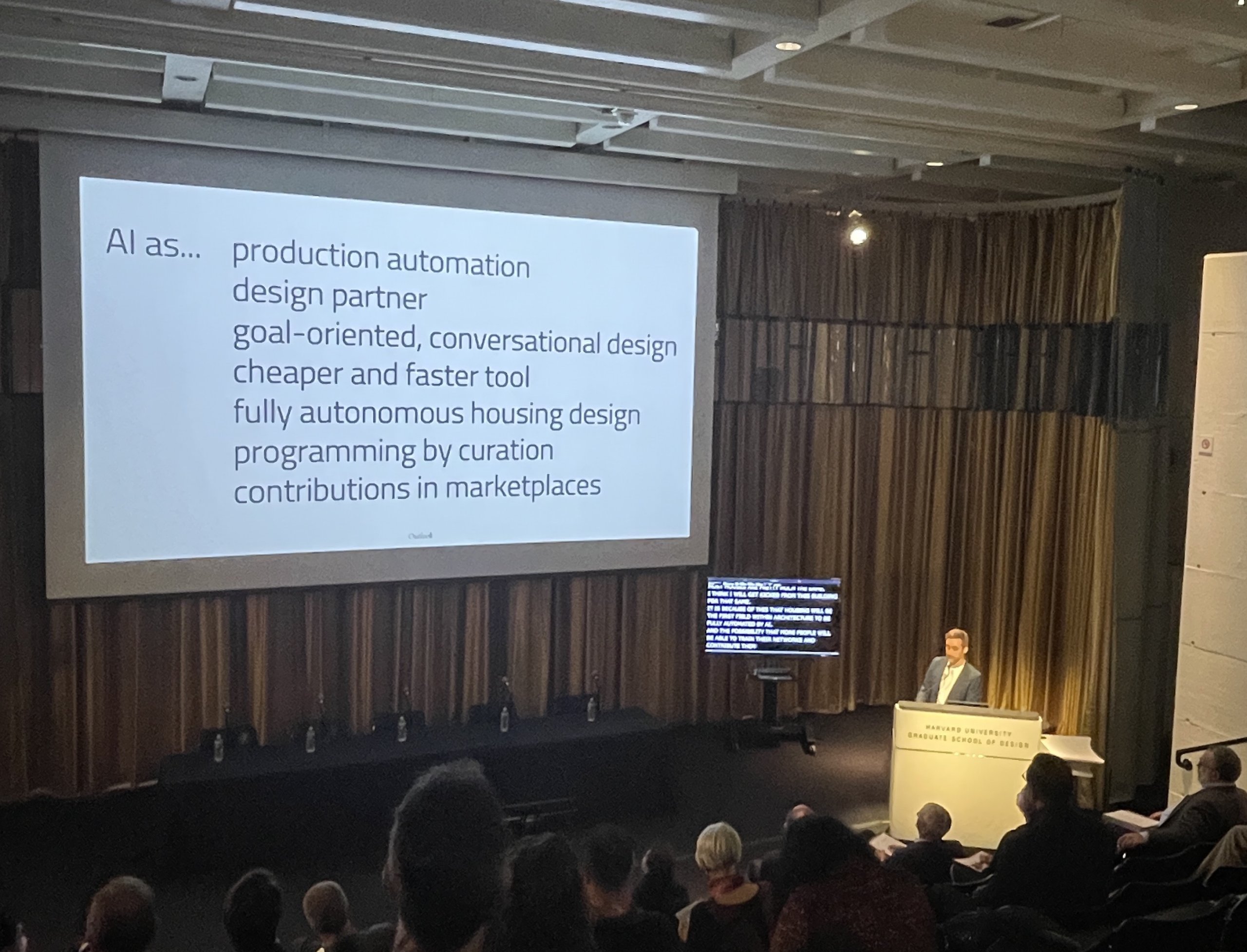

Since crossing over into the digital world, García del Castillo has advised corporate clients and written lengthy studies (most notably his 2019 Harvard doctoral thesis, “Enactive Robotics: An Action-State Model for Concurrent Machine Control”) on the use of technology in the conception and construction of buildings. And while he’s prepared to entertain worst-case scenarios, the primary gist of his pedagogical project to date has been resoundingly optimistic: don’t fear the bots. “Computer scientist Ted Nelson once said that computers are Rorschach tests,” says García del Castillo. “Whatever you see is what you project on them.” In García del Castillo’s view, the dire predictions regarding fully automated architects, interior designers, artists, and even (gasp) journalists are not only hyperbolic but also neglect the immense opportunity that AI actually presents, failing to recognize its generative power for design innovation.

What could that innovation look like? And how can designers harness AI to help produce it? In a recent conversation (conducted by phone—but we checked, he’s human), García del Castillo laid out his vision for the real-life future of synthetic architecture.

“When I started out, I was super into architecture: space, texture, light, all that jazz. But the profession as a day-to-day thing was not what I thought it was going to be—they sell you the dream of being a starchitect, but it’s not that nice. Also, I was just not that good a designer of buildings! I never really enjoyed the studio and I wasn’t happy.

Then I discovered a culture of people in the design field using computation and computer code for creative purposes. I got really excited about that. I started working weekends, learning stuff, doing seminars, and finally did a Master’s degree in technological innovation. By that point I was already 30. When I told my friends and family I wanted to switch careers, they said, ‘How are you going to give up on architecture now?’ But I wanted to reinvent myself, and so I made my way to Harvard.

My thesis had a very strong human-computer interaction component. What I was trying to explore was how to enhance human creativity by working in partnership with digital agents, and seeing how those agents might make us more expressive. Then, about five years ago, the whole field of AI exploded; these neural networks started coming out, creating images and creating text, and I saw an opportunity to continue that partnership project with simulated robots.

Most robots are pretty subservient, but neural networks have this uncertainty component—which is fascinating, because if you want to enhance creativity, you want to partner with something that sparks curiosity. Some degree of unexpectedness enriches you, and that’s embedded in how these AI networks work.

What AI is giving us is just one more way to do design. You can design in a void, and whatever you come up with is whatever you find in your own mind. Or you can do it in a team with other humans—brainstorm and share ideas. With AI, it’s pretty similar to the latter. With these neural networks, you write text and it gives you an image. You can use that image just as it is—or it can be an inspiration, something to help you create your own design.

Let’s say I have a brief to design a house in the real world, with some basic parameters: I know my site is this cornfield with a mountain over there, a lake over here. I start punching in prompts—‘house,’ ‘view,’ ‘lake,’ ‘cornfield’—and get an image. I can then modify the prompts, put in a bigger house, get rid of the cornfield. It’s an iterative process, and as the designer continues refining it, they gain awareness of what they actually want. In a way, it’s not that different from going to a therapist.

With any innovation, there’s always a similar pattern. A new technology comes in, and there are two ways to approach it. The first is: Can it help us do what we are doing better, faster, cheaper? Then there are people who ask instead, ‘What is inherent in this technology?’ It’s like when steel or concrete became a popular material, and façades no longer had to be two-foot-thick brick. Of course, when cast iron was developed, the first thing people did was cast it in Doric styles—that’s the only language they had. It takes time to realize what a material wants to be.

With neural networks, there already is a kind of architectural language appearing in the images. I follow many of my colleagues doing AI, and you can see these stylistic trends beginning to emerge: There’s a level of organic-ness, a lot of color, and a level of what I would call ‘glitchy-ness.’ Neural networks don’t understand tectonic principles; something might look like a real arch, until you look closer and realize it’s defying gravity.

But that’s just because we’ve gotten so good at building things that are almost that way, creating these architectural gestures. It’s a bias that comes from the real world, not just from the images out there. And if you look at any of these online platforms, so many of the ‘architectural’ images are not made by architects at all, but by graphic artists. Everything tends to look organic, dreamlike, glitchy. Ultimately, as architects move into this field, I don’t think any of them will end up generating the snapshot of an image they get from AI. They won’t build the same thing, but will rather build on what it suggests to them.

There are also a lot of utilitarian applications. When I was writing machine learning, all clients wanted to see was apartment and office layouts. They just wanted a button in Revit that would take all the plans and make the tiny adjustments to align top and bottom. So you’ll see lots of automation for menial, boring tasks. But the creative aspect is more complicated.

One thing we will eventually have is some kind of ethical framework, where you have to disclose details of what is and isn’t produced by AI. And there will be value, people paying extra premiums even, for things that are guaranteed to have been generated by a human. It’ll be like a USDA Organic seal. In journalism, for example, people will say, ‘Hey, there is value in having articles written by a human being!’ But that doesn’t mean we won’t see some AI journalists.

At the end of the day, our new role as designers will be not just creating media to build as architecture, but curating the media that synthetic agents help us produce. We’ll be the judges of what’s good or not good, and of what we want to share with the world.”

This interview has been edited and condensed for clarity.